Adversarial Machine Learning Poses a New Threat to National Security

Imagine the following scenarios: An explosive device, an enemy fighter jet and a group of rebels are misidentified as a cardboard box, an eagle and a sheep herd, respectively. A lethal autonomous weapons system misidentifies friendly combat vehicles as enemy combat vehicles. Satellite images of a group of students in a schoolyard are misinterpreted as moving tanks. In any of these situations, the consequences of acting on the misidentification are extremely frightening. This is the crux of the emerging field of adversarial machine learning.

The rapid progress in computer vision made possible by deep learning techniques has driven the wide adoption of applications based on artificial intelligence (AI). The ability to analyze different kinds of images and data from heterogeneous sensors makes this technology particularly interesting for military and defense applications. However, these machine learning (ML) techniques were not designed to compete with intelligent opponents; therefore, the very characteristics that make them so interesting also represent their greatest risk in this class of applications. More precisely, a small perturbation of the input data is enough to compromise the accuracy of ML algorithms and render them vulnerable to manipulation by adversaries, hence the term adversarial machine learning.

Adversarial attacks pose a tangible threat to the stability and safety of AI and robotic technologies. The exact conditions for such attacks are typically quite unintuitive for humans, so it is difficult to predict when and where the attacks could occur. And even if one could estimate the likelihood of an adversarial attack, the exact response of the AI system can be difficult to predict, leading to further surprises and less stable, less safe military engagements and interactions. Despite this intrinsic weakness, adversarial ML has remained an underestimated topic in the military industry. The case to be made here is that ML needs to be intrinsically more robust before it can be put to good use in scenarios with intelligent and adaptive opponents.

AI is a rapidly growing field of technology with potentially significant implications for national security. As such, the United States and other nations are developing AI applications for a range of military functions. AI research is underway in intelligence collection and analysis, logistics, cyber operations, information operations, command and control, and in a variety of semiautonomous and autonomous vehicles. Already, AI has been incorporated into military operations in Iraq and Syria. AI technologies present unique challenges for military integration, particularly because the bulk of AI development is happening in the commercial sector. Although AI is not unique in this regard, the defense acquisition process may need to be adapted for acquiring emerging technologies like AI. In addition, many commercial AI applications must undergo significant modification prior to being functional for the military.

For a long time, ML researchers focused solely on improving the performance of ML systems (true positive rate, accuracy, etc.). Nowadays, the robustness of these systems can no longer be ignored; many of them are highly vulnerable to intentional adversarial attacks. This vulnerability renders them inadequate for real-world applications, especially mission-critical ones.

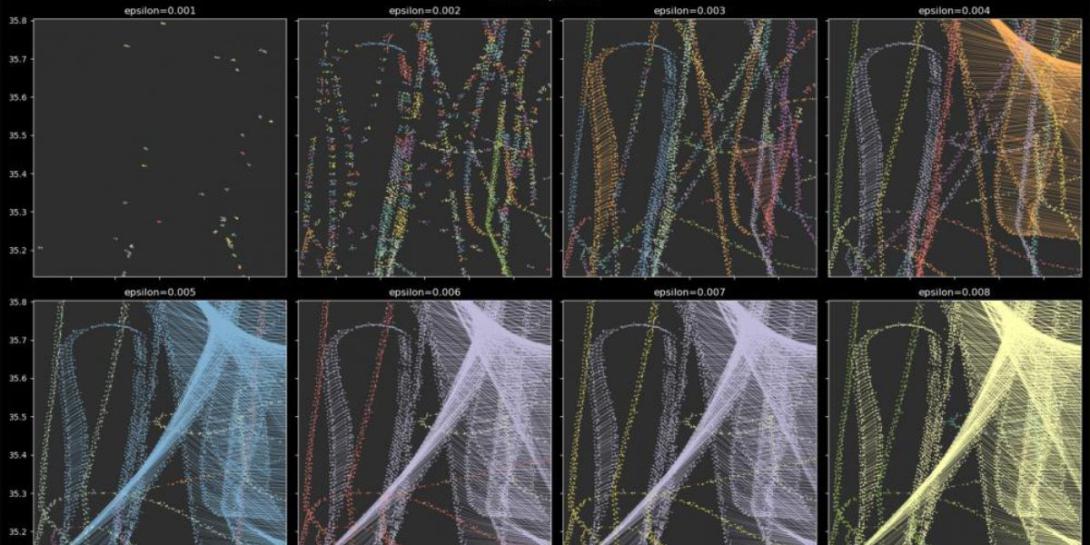

An adversarial example is an input to an ML model that an attacker has intentionally designed to cause the model to make a mistake. In general, the attacker may have no access to the architecture of the ML system, which is known as a black-box attack; an attacker with full knowledge of the model mounts a white-box attack. Attackers can approximate a white-box attack by using the notion of “transferability,” which means that an input designed to confuse a certain ML model is able to trigger a similar behavior within a different model. This was demonstrated in many instances by a team of researchers at the U.S. Army Cyber Institute.
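To make the idea of a small, deliberate perturbation concrete, here is a minimal sketch in Python. It assumes a white-box setting and uses the fast gradient sign method, a well-known technique that this article does not itself name. The logistic model, its weights and the input values are hypothetical and chosen only for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    """Probability that input x belongs to class 1 under a logistic model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm_perturb(weights, bias, x, label, epsilon):
    """Move each feature by at most epsilon in the direction that raises the loss.

    For a logistic model with cross-entropy loss, the gradient of the loss
    with respect to the input is (p - label) * weights.
    """
    p = predict(weights, bias, x)
    return [xi + epsilon * sign((p - label) * w) for xi, w in zip(x, weights)]

# Hypothetical toy model: 50 features with alternating positive and negative weights.
weights = [0.2 if i % 2 == 0 else -0.2 for i in range(50)]
bias = 0.0

# Hypothetical clean input that the model assigns to class 1.
x = [0.6 if i % 2 == 0 else 0.5 for i in range(50)]
epsilon = 0.1

x_adv = fgsm_perturb(weights, bias, x, label=1, epsilon=epsilon)

clean_score = predict(weights, bias, x)    # above 0.5: classified as class 1
adv_score = predict(weights, bias, x_adv)  # below 0.5: classification flips
largest_change = max(abs(a - b) for a, b in zip(x_adv, x))

print(clean_score, adv_score, largest_change)
```

No single feature moves by more than `epsilon`, yet the many small shifts add up across features and flip the predicted class. In a black-box setting, an attacker would compute such a perturbation against a substitute model and rely on transferability.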

General concerns about the impacts of adversarial behavior on stability, whether in isolation or through interaction, have been emphasized by recent demonstrations of attacks against these systems. Perhaps the most widely discussed attack cases involve image classification algorithms that are deceived into “seeing” images in noise or are easily tricked by pixel-level changes, so they classify a school bus as an ostrich, for example. Similarly, game-playing systems that outperform any human (e.g., chess engines or AlphaGo) can suddenly fail if the game structure or rules are slightly altered in ways that would not affect a human. Autonomous vehicles that function reasonably well in ordinary conditions can, with the application of a few pieces of tape, be induced to swerve into the wrong lane or speed through a stop sign. This list of adversarial attacks is by no means exhaustive and continues to grow over time.

Although AI has the potential to impart a number of advantages in the military context, it may also introduce distinct challenges. AI technology could, for example, facilitate autonomous operations, lead to more informed military decision making and increase the speed and scale of military action. However, it may also be unpredictable or vulnerable to unique forms of manipulation. As a result of these factors, analysts hold a broad range of opinions on how influential AI will be in future combat operations. While a few analysts believe that the technology will have minimal impact, most believe that AI will have at least an evolutionary, if not revolutionary, effect.

U.S. military forces utilize AI and ML to improve and streamline military operations and other national security initiatives. Regarding intelligence collection, AI technologies have already been incorporated into military operations in Iraq and Syria, where computer vision algorithms have been used to detect people and objects of interest. Military logistics is another area of focus in this realm. The U.S. Air Force is using AI to keep track of when its planes need maintenance, and the U.S. Army is using IBM’s AI software “Watson” for predictive maintenance and analysis of shipping requests. Defense applications of AI also extend to semiautonomous and autonomous vehicles, including fighter jets, drones or unmanned aerial vehicles, ground vehicles and ships.

One might hope that adversarial attacks would be relatively rare in the everyday world since “random noise” that targets image classification algorithms is actually far from random.

Unfortunately, this confidence is almost certainly unwarranted for defense or security technologies. These systems will invariably be deployed in contexts where the other side has the time, energy and ability to develop and construct exactly these types of adversarial attacks. AI and robotic technologies are particularly appealing for deployment in enemy-controlled or enemy-contested areas since those environments are the riskiest ones for human soldiers, in large part because the other side has the most control over the environment.

As an illustration, Massachusetts Institute of Technology (MIT) researchers tricked an image classifier into thinking that machine guns were a helicopter. Should a weapons system equipped with computer vision ever be trained to respond to certain machine guns with neutralization, this misidentification could cause unwanted passivity, creating a potentially life-threatening vulnerability in the computer’s ML algorithm. The scenario could also be reversed, in which the computer misidentifies a helicopter as a machine gun. In a different domain, attackers who know that an AI spam filter tracks certain words, phrases and word counts for exclusion can manipulate the algorithm by using acceptable words, phrases and word counts and thus gain access to a recipient’s inbox, further increasing the likelihood of email-based cyber attacks.

In summary, AI-enabled systems can fail due to adversarial attacks intentionally designed to trick or fool algorithms into making a mistake. These examples demonstrate that even simple systems can be fooled in unanticipated ways and sometimes with potentially severe consequences. Adversarial learning applies across the cybersecurity domain, from malware detection to speaker recognition to cyber-physical systems, as well as to deep fakes and generative networks. With the U.S. Defense Department increasing its funding for and deployment of automation, artificial intelligence and autonomous agents, it is time for this issue to take center stage. There needs to be a high level of awareness of the robustness of such systems before they are deployed in mission-critical settings.

Many recommendations have been offered to mitigate the dangerous effects of adversarial machine learning in military settings. Keeping humans in or on the loop is essential in such situations. When there is human-AI teaming, people can recognize that an adversarial attack has occurred and guide the system to appropriate behaviors. Another technical suggestion is adversarial training, which involves feeding an ML algorithm a set of potential perturbations during training. In the case of computer vision algorithms, this would include images of stop signs bearing strategically placed tape or stickers, or of school buses with slight pixel-level alterations. That way, the algorithm can still correctly identify phenomena in its environment despite an attacker’s manipulations.
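The mechanics of adversarial training can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any fielded system: the model is a plain logistic classifier, the perturbations are generated with the fast gradient sign method, and the dataset, the function names and the hyperparameters (`eps`, `lr`, `epochs`) are all hypothetical.

```python
import math

def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """Probability of class 1 under a logistic model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def sign(v):
    return (v > 0) - (v < 0)

def perturb(w, b, x, y, eps):
    """Worst-case shift of size eps per feature against the current model."""
    p = predict(w, b, x)
    return [xi + eps * sign((p - y) * wi) for xi, wi in zip(x, w)]

def adversarial_train(data, eps, lr=0.1, epochs=200):
    """Gradient descent on clean examples plus freshly perturbed copies."""
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        # Augment every clean example with an adversarial version of itself.
        batch = []
        for x, y in data:
            batch.append((x, y))
            batch.append((perturb(w, b, x, y, eps), y))
        grad_w, grad_b = [0.0] * len(w), 0.0
        for x, y in batch:
            err = predict(w, b, x) - y
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / len(batch) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(batch)
    return w, b

def robust_accuracy(w, b, data, eps):
    """Fraction of examples still classified correctly after perturbation."""
    hits = 0
    for x, y in data:
        x_adv = perturb(w, b, x, y, eps)
        hits += int((predict(w, b, x_adv) > 0.5) == (y == 1))
    return hits / len(data)

# Hypothetical, well-separated two-class dataset: (features, label).
DATA = [
    ([2.0, 1.8], 1), ([1.7, 2.2], 1), ([2.3, 2.0], 1), ([1.9, 1.6], 1),
    ([-2.0, -1.8], 0), ([-1.7, -2.2], 0), ([-2.3, -2.0], 0), ([-1.9, -1.6], 0),
]

w, b = adversarial_train(DATA, eps=0.5)
print(robust_accuracy(w, b, DATA, eps=0.5))
```

The key design choice is that the perturbed copies are regenerated against the current model at every pass, so the classifier keeps seeing the manipulations that would hurt it most at that moment. On a toy dataset this clean, the sketch only demonstrates the procedure; it says nothing about how much robustness adversarial training buys on real sensor data.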

Given that ML in general, and adversarial ML in particular, are still relatively new phenomena, the research on both is still emerging. As new attack techniques and defense countermeasures are being implemented, caution needs to be exercised by U.S. military forces in employing new AI systems in mission-critical operations. As other nations, particularly China and Russia, are making significant investments in AI for military purposes, including in applications that raise questions regarding international norms and human rights, it remains of utmost importance for the United States to maintain a strategic position to prevail on future battlefields.

Dr. Elie Alhajjar is a senior research scientist at the Army Cyber Institute and jointly an associate professor in the Department of Mathematical Sciences at the United States Military Academy in West Point, New York, where he teaches and mentors cadets from all academic disciplines. His work is supported by grants from the National Science Foundation, the National Institutes of Health, the National Security Agency and the Army Research Laboratory, and he was recently named the Dean’s Fellow for research.
