The Eyes Have It: Novel Eye-Tracking Tech Offers Behavior and Cognition Insights

High-speed eye-tracking technology may one day give military members, cyber warriors, veterans and many others continuous insight into biometrics related to stress and cognitive load. Researchers behind the Pupil-Light prototype envision the technology becoming a widespread tool for supporting research into cognitive and behavioral states, including attention, stress and emotional regulation.

The system is currently being developed as a research and performance tool with clinical applications as a long-term goal. The prototype looks like ordinary black-rimmed glasses, except for a few pieces of metallic hardware along the bridge and inside the frames. It uses a unique eye-tracker that combines biomedical engineering and neuroscience to support research into the neural networks that regulate human behavior, according to a University of North Carolina-Chapel Hill article. Unlike traditional eye trackers that rely on cameras and video processing, Pupil-Light uses a compact, camera-free system that detects subtle changes in pupil size and eye movement by converting light signals directly into measurements.

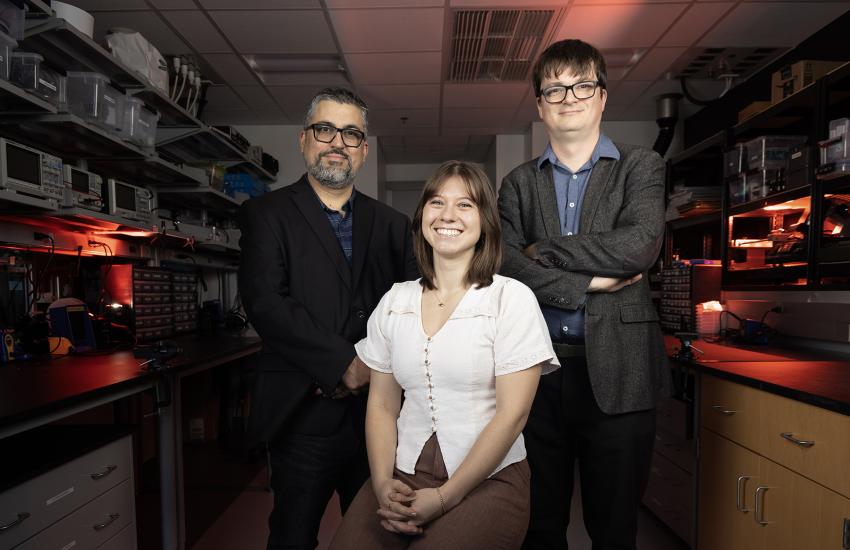

Pupil-Light is being developed by Carolina Instruments, a startup created by two UNC-Chapel Hill professors, Jose Rodríguez-Romaguera and Nicolas Christian Pégard, along with their former student, Ellora McTaggart, who now serves as the company CEO.

Traditionally, scientists study neuropsychiatric conditions such as attention-deficit/hyperactivity disorder (ADHD), depression, anxiety or post-traumatic stress disorder (PTSD) by observing visible changes in behavior. But shifts in pupil size and eye motion often appear first, the article explains. As people enter heightened or reduced states of arousal—alertness, readiness or excitement—their pupils respond in ways that reflect neuronal signaling in key brain regions. These signals often precede observable behavioral changes.

So far, testing has been done mostly with mice, but the researchers expect to begin testing with humans soon. While it is common for researchers to try to shrink the size of a prototype, the Carolina Instruments team faces a different challenge. “We started out in preclinical research, so we developed a version for mice. We kind of have a reverse problem that a lot of people have when they’re developing tech like this, where we’re actually trying to make it bigger right now for humans,” McTaggart reported to SIGNAL Media during a Microsoft Teams interview with all three researchers.

Rodríguez-Romaguera explained that neurotransmitters sprinkled throughout the brain affect both the eyes and behavior. “It’s a strong neuroscience-based approach. I want to say that it’s like we’re trying to understand pupillary dynamics, eye movements, heart rate and respiration at the same time, but in freely moving mice. How can we use all this multidimensional data to infer brain states? That’s what we’re doing in mice.”

Few environments test the human nervous system like combat. Stress spikes suddenly. Cognitive load fluctuates wildly. Sleep loss is constant. Decision‑making under pressure is life‑or‑death. The researchers believe the military could benefit from next-generation pupil‑based brain monitoring. Rodríguez‑Romaguera, a neuroscientist studying fear and anxiety, put it bluntly: “Heart rate and respiration aren’t enough. Pupil dynamics give you fast‑paced information about what exactly is going on right now in front of a soldier.”

Imagine a soldier on a long mission whose cognitive performance begins to degrade. The system could potentially detect information that researchers associate with fatigue and cognitive state. “We’re actively collaborating with Dr. Graham Diering, a sleep expert, to study if pupil dynamics correlate with sleep need,” Rodríguez‑Romaguera explained. “If you could measure how their sleep need is increasing, you could have an idea when they risk making the wrong decision.”

The team also says its technology may help predict physiological changes associated with anxiety and stress responses. “One of my biggest inspirations is being able to analyze patterns associated with stress, including individuals with PTSD.”

Just as important, commanders could gain real‑time insights into attention and performance in demanding environments. Whether or not soldiers themselves should see their readings is still an open question, depending on the use case. “If they know their state, that might affect how they act,” Rodríguez‑Romaguera noted. “You may want somebody back in HQ watching their status, as they already do with many wearables.”

The glasses also offer another military‑focused advantage: extremely compact, extremely fast eye tracking that could integrate directly into helmets and extended reality (XR) training headsets. “It’s a very practical small‑form‑factor eye tracker,” said Pégard, co‑inventor and optics expert. “If you needed to integrate an eye tracker for optimizing displays, training or simulation systems, this could do it.”

The pupil is more than a simple aperture for light. It expands and contracts under the influence of major neuromodulators—including norepinephrine and dopamine—that orchestrate brain state.

Rodríguez‑Romaguera likened these chemicals to a liquid medium that brain processes swim in: “The minute norepinephrine is pumping through the brain, we should be able to see that because it’s such a broad brain‑state response.”

Changes in these neurochemical rhythms unfold in milliseconds. Capturing them requires sampling eye position and pupil size at extraordinary speed. Existing XR devices achieve about 200 samples per second; Pupil-Light offers 2,000. “There’s a lot of dynamics that happen beyond 200 hertz,” McTaggart said. “The velocity of the eyes, the acceleration of the eyes—these carry important information.”
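To make the sampling-rate comparison concrete, here is a minimal Python sketch. It is not Carolina Instruments' processing pipeline. It takes only the two rates quoted above (200 and 2,000 samples per second) and shows the time between samples, the highest frequency each rate can represent (half the sampling rate, by the Nyquist criterion), and how eye velocity and acceleration could be estimated from position samples with simple finite differences. The function names and the 30-millisecond window are hypothetical, chosen purely for illustration.

```python
def sample_interval_ms(rate_hz):
    """Time between consecutive samples, in milliseconds."""
    return 1000.0 / rate_hz


def nyquist_hz(rate_hz):
    """Highest signal frequency representable at this sampling rate."""
    return rate_hz / 2.0


def samples_in_window(duration_ms, rate_hz):
    """Number of samples captured during an event of the given duration."""
    return int(duration_ms * rate_hz / 1000)


def finite_differences(positions_deg, rate_hz):
    """Estimate velocity (deg/s) and acceleration (deg/s^2) from positions.

    Uses plain first differences; a real system would likely filter the
    signal first, since differencing amplifies measurement noise.
    """
    dt = 1.0 / rate_hz
    velocity = [(b - a) / dt for a, b in zip(positions_deg, positions_deg[1:])]
    acceleration = [(b - a) / dt for a, b in zip(velocity, velocity[1:])]
    return velocity, acceleration


if __name__ == "__main__":
    for rate in (200, 2000):
        print(rate, "Hz:",
              sample_interval_ms(rate), "ms between samples,",
              nyquist_hz(rate), "Hz Nyquist limit,",
              samples_in_window(30, rate), "samples in a 30 ms window")
```

Under these assumptions, a 30-millisecond eye movement yields 6 samples at 200 hertz and 60 at 2,000 hertz, which is why derivative quantities such as velocity and acceleration are far better resolved at the higher rate.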

And unlike camera‑based systems, their approach sidesteps the computational cost of processing hundreds of images per second. That efficiency translates into lower power usage, faster onboard processing and easier integration with commercial headsets. “If you want to do eye tracking these days, you need a calibrated machine in a room. It’s very impractical. The more you can make recordings in an environment that’s natural for a person in their daily life the more valuable the data becomes.”

When asked whether the glasses might help with neurological conditions such as multiple sclerosis and amyotrophic lateral sclerosis, also known as ALS or Lou Gehrig’s disease, the researchers suggested it’s possible but too soon to tell. “Any disorder that causes broad disturbances we may be able to pick up, but those are all ‘ifs’. Do we have a lot of dreams and hopes for all the disorders? Sure we do,” Rodríguez-Romaguera said.

McTaggart added that science has already correlated characteristics of the pupils across a range of neurological conditions. “There are a number of diseases and neurological conditions that have been studied in relation to eye tracking,” McTaggart said. “Parkinson’s, Alzheimer’s, autism—there’s a lot of research around that—PTSD and traumatic brain injuries as well.”

They pointed out that the academic‑to‑startup transition has reshaped roles. McTaggart, formerly the lab’s manager, now leads business strategy and investor relations. The professors offer guidance but keep their distance.

“Our current position is to stay out of the way,” Rodríguez‑Romaguera joked. “The mentoring relationship flipped. Now we’re learning from her.”

The startup company has received a $400,000 Small Business Technology Transfer grant from the National Institutes of Health. The UNC laboratory received separate funding from the National Institute of Child Health and Human Development and from multiple foundations.

Carolina Instruments has only recently begun fundraising.

Next, the team intends to target the XR market. “If we can integrate into headsets that are much more broadly accessible, then that expands the opportunity and the use cases,” McTaggart offered. “We have this long-term vision of the clinical and neuroscience applications, but we also recognize that there are a number of different areas in simulation training and different XR environments where eye tracking itself has a lot of value. Really high-speed eye tracking in headsets that are more accessible, that we don’t have to ship and manufacture ourselves, has a lot of value for reaching out beyond our own company focus.”

Pégard emphasized the benefits that the technology’s speed offers for XR environments. “For instance, in a headset, one of the difficulties is to display the image fast enough that you can get a real-time experience. If you turn your head, the image needs to move with you, but that’s a lot of data. If you can track the eyes and see where the person is looking, you can prioritize the reconstruction of the things that matter the same way when you’re observing a scene,” he said.
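The idea Pégard describes, spending rendering effort where the viewer is looking, is generally known as gaze-contingent or foveated rendering. The Python sketch below illustrates the general concept only and is not the team's method or any headset's API. It assumes the display is split into tiles with centers in normalized 0-to-1 screen coordinates; the function names and the radius thresholds are hypothetical.

```python
import math


def tile_priority(tile_center, gaze, inner_radius=0.1, outer_radius=0.3):
    """Pick a rendering quality for a tile from its distance to the gaze point.

    The radii are illustrative assumptions, not measured values.
    """
    dist = math.dist(tile_center, gaze)
    if dist <= inner_radius:
        return "high"
    if dist <= outer_radius:
        return "medium"
    return "low"


def render_order(tile_centers, gaze):
    """Order tiles so those nearest the gaze point are reconstructed first."""
    return sorted(tile_centers, key=lambda center: math.dist(center, gaze))


if __name__ == "__main__":
    gaze = (0.6, 0.6)
    tiles = [(0.0, 0.0), (0.5, 0.5), (1.0, 1.0)]
    for tile in render_order(tiles, gaze):
        print(tile, tile_priority(tile, gaze))
```

A faster eye tracker matters here because the gaze estimate feeding a scheme like this goes stale quickly during rapid eye movements.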

Asked how soon the technology could transition to the marketplace, McTaggart said it all depends on the funding. “Ideally, within the next two years we’ll have some human validation data out on the market, but of course it’s very dependent on how our fundraising goes.”
